Usability Evaluation

--for Carmel Clay Public Library (CCPL) Website


About our project


Carmel Library is a local library that has welcomed readers of all ages, from children to seniors, since 1904. Its website offers readers many features, but it also has several usability issues waiting to be fixed. Our team of five HCI master's students chose the library as our client, explored the website's usability issues, and offered suggestions for improvement.

The project ran from Aug. 21 to Dec. 5, 2017, and took around 50 hours. My work included an individual heuristic evaluation (HE) and cognitive walkthrough (CW), a think aloud test, and the usability study, for which I also wrote the study protocol.

Our findings


We identified three main problems with this website:


Evaluation Method - Expert evaluation

Our team evaluated the library website's usability with three individual evaluation methods: Cognitive Walkthrough, Think Aloud, and Heuristic Evaluation. We also invited 10 readers to take part in a usability test of the website at Carmel Library.

Cognitive Walkthrough (CW)


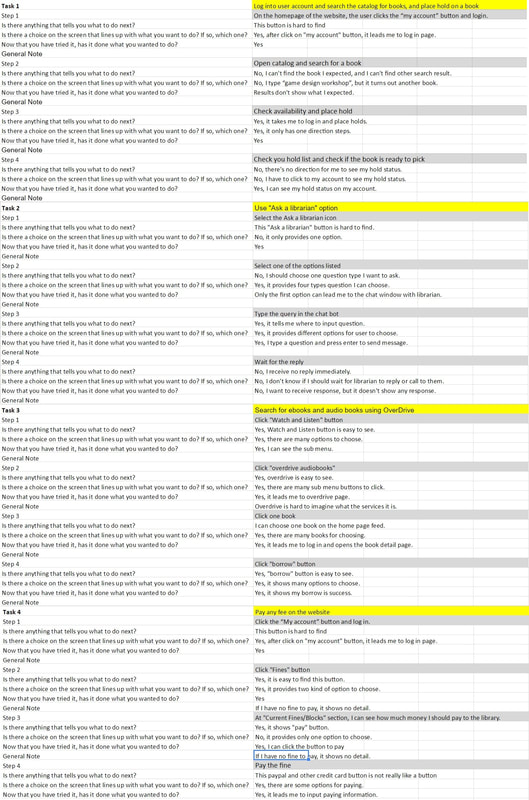

CW is a task-based "expert evaluation" method that one or several usability experts can use to evaluate a website or mobile application. The experts choose the tasks they will focus on and walk through each task step by step, deciding whether each step succeeds or fails.

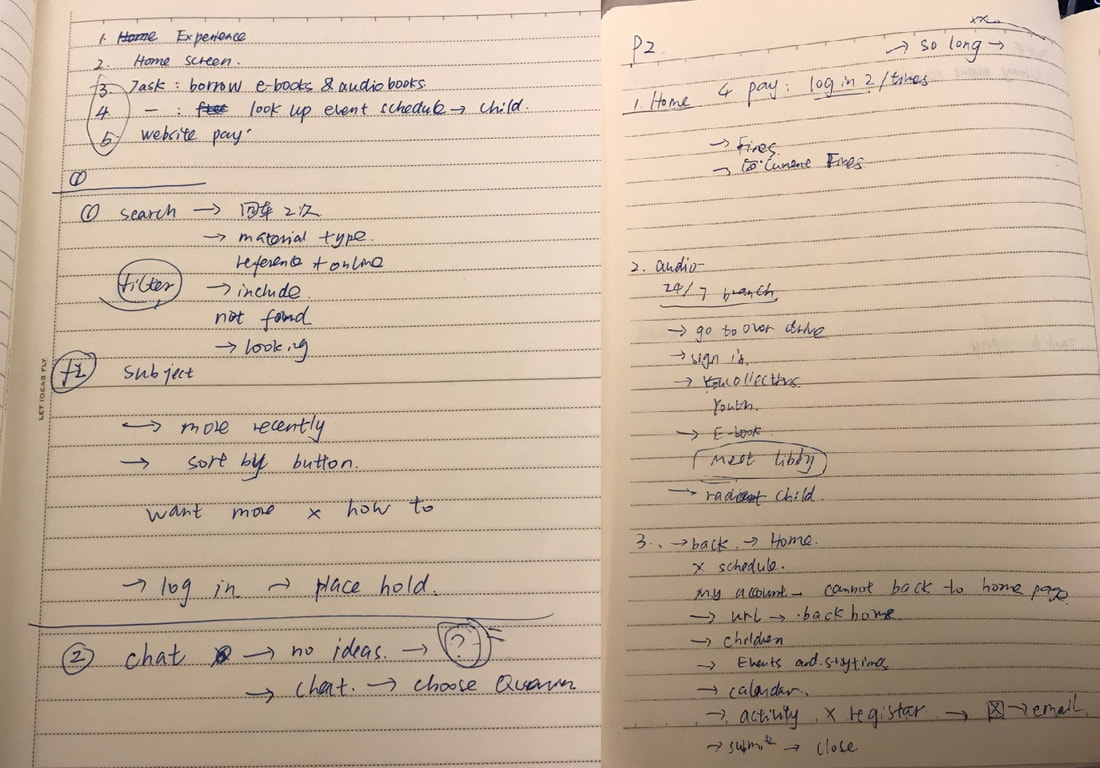

Process: To evaluate this website, we created two personas (typical user profiles), two scenarios (situations in which the personas use this website), four main tasks (features the personas want to use on this website), and the steps for each task. Each of us recorded our individual evaluation results in a table, and then we discussed the results together. For each step, we answered these questions:

Result: After discussing our results, we identified three main problems:

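The per-step table described above can be sketched as a small data structure. This is a minimal Python sketch, not the team's actual table. It assumes the four questions commonly used in cognitive walkthroughs, because the team's own question list is not reproduced in this write-up. The task and step names are hypothetical. A step counts as a success only when every question is answered "yes".

```python
from dataclasses import dataclass, field

# ASSUMPTION: the four questions commonly used in cognitive walkthroughs.
# The team's own question list is not reproduced in this write-up.
CW_QUESTIONS = (
    "Will the user try to achieve the right effect?",
    "Will the user notice that the correct action is available?",
    "Will the user associate the correct action with the effect they want?",
    "After the action, will the user see that progress is being made?",
)


@dataclass
class Step:
    description: str
    answers: tuple  # one True/False answer per question, in CW_QUESTIONS order
    note: str = ""

    def __post_init__(self):
        if len(self.answers) != len(CW_QUESTIONS):
            raise ValueError("expected one answer per walkthrough question")

    def succeeded(self) -> bool:
        # A step succeeds only if every question is answered "yes".
        return all(self.answers)


@dataclass
class Task:
    name: str
    steps: list = field(default_factory=list)

    def failed_steps(self) -> list:
        return [step for step in self.steps if not step.succeeded()]


def failures_by_question(tasks) -> dict:
    """Count "no" answers per question across all tasks to spot recurring problems."""
    counts = {question: 0 for question in CW_QUESTIONS}
    for task in tasks:
        for step in task.steps:
            for question, ok in zip(CW_QUESTIONS, step.answers):
                if not ok:
                    counts[question] += 1
    return counts


# Hypothetical example: one evaluator's record for one made-up task.
find_hours = Task("Find the library's opening hours (hypothetical)", [
    Step("Open the home page", (True, True, True, True)),
    Step("Locate the hours link", (True, False, True, True)),
])
print([step.description for step in find_hours.failed_steps()])
```

Passing every evaluator's tasks to `failures_by_question` shows which question fails most often. This is one way to turn individual tables into a shared starting point for the group discussion.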

Think Aloud Testing


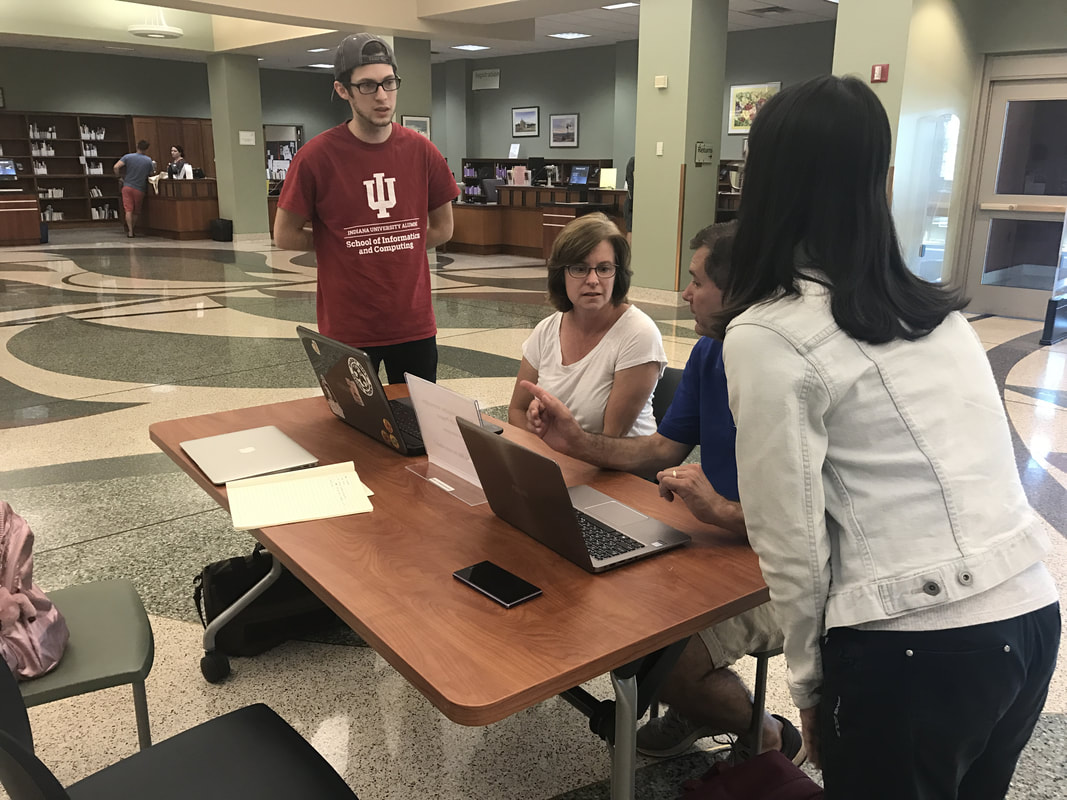

Think aloud testing is a usability evaluation method in which observers watch how users use a website. The observer gives users tasks to complete and does not talk to or interrupt them during the test. While working on each task, users speak aloud about what they see, what they want to do, and what they think. This method lets observers see directly how users browse the website, how they complete certain tasks, where they get confused, and where they fail.

Process: We invited two users to take part in our testing at Carmel Library. We gave each user one scenario and two or three tasks, and asked them to complete the tasks as best they could. One moderator ran each session while a note taker recorded what the user said and did.

Result: After completing the two think aloud tests, we reached two findings:


Heuristic Evaluation (HE)


Heuristic evaluation is a rule-based method and another form of "expert evaluation," performed by one or more experts. They assess the entire website to judge whether it violates established design principles, rate the severity of each problem, and give design advice.

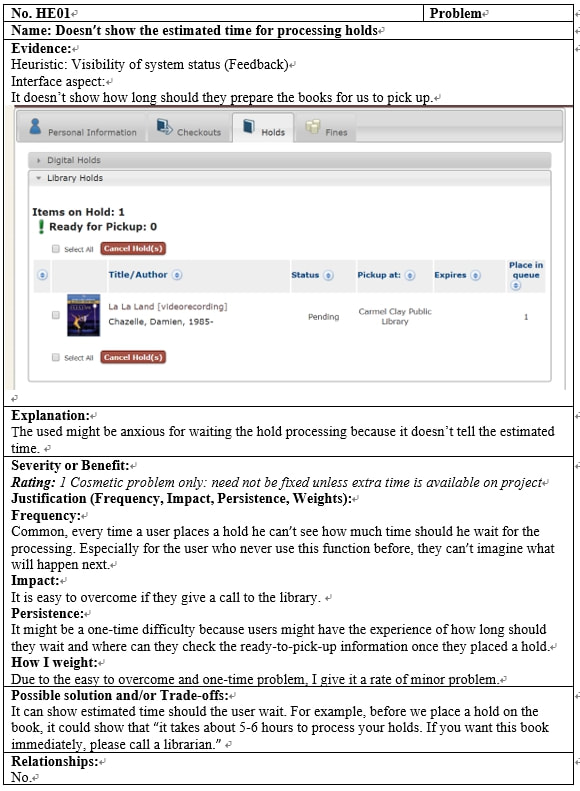

Process: Our team followed Nielsen’s 10 usability heuristics, a widely used set of design principles for web and app interfaces. Each of us evaluated the whole website individually, picked out the five most serious problems, rated them, and added comments. We then discussed our findings together and selected the top 10 problems on this website. For each problem, we rated its:

Result: We compiled our heuristic findings and assigned a severity rating to each:

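The step of merging individual ratings into one ranked list can be sketched as follows. This Python sketch assumes Nielsen's 0-4 severity scale and ranks problems by their mean severity across evaluators. The write-up does not say which scale the team used. The team also chose its top 10 through discussion rather than by formula, so treat the averaging rule and the problem IDs below as hypothetical.

```python
from collections import defaultdict

# ASSUMPTION: Nielsen's 0-4 severity rating scale.
SEVERITY_LABELS = {
    0: "not a usability problem",
    1: "cosmetic problem only",
    2: "minor usability problem",
    3: "major usability problem",
    4: "usability catastrophe",
}


def rank_problems(ratings, top_n=10):
    """Merge individual heuristic-evaluation ratings and rank the problems.

    ratings: iterable of (evaluator, problem_id, heuristic, severity) tuples.
    Returns up to top_n (problem_id, heuristic, mean_severity, n_evaluators)
    tuples, highest mean severity first.
    """
    severities = defaultdict(list)
    heuristic_of = {}
    for evaluator, problem_id, heuristic, severity in ratings:
        if severity not in SEVERITY_LABELS:
            raise ValueError(f"{evaluator}: severity must be 0-4, got {severity}")
        severities[problem_id].append(severity)
        heuristic_of[problem_id] = heuristic  # keeps the last heuristic label seen

    rows = [
        (pid, heuristic_of[pid], sum(sev) / len(sev), len(sev))
        for pid, sev in severities.items()
    ]
    # Sort by mean severity (desc), then number of evaluators who flagged it (desc),
    # then problem id so the output order is deterministic.
    rows.sort(key=lambda row: (-row[2], -row[3], row[0]))
    return rows[:top_n]


# Hypothetical ratings from three evaluators (not the team's data).
ratings = [
    ("A", "nav-menu", "Consistency and standards", 4),
    ("B", "nav-menu", "Consistency and standards", 3),
    ("A", "search-results", "Visibility of system status", 2),
    ("C", "event-filter", "Recognition rather than recall", 4),
]
for row in rank_problems(ratings):
    print(row)
```

Using the number of evaluators as a tie-breaker means that, when two problems have the same mean severity, the one more evaluators noticed ranks higher.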

Usability Study - User reviews

Usability testing is a research process that involves recruiting target users, writing an interview script, defining several important tasks, asking users to complete those tasks in front of researchers, and recording the product's usability problems.

Usability Study


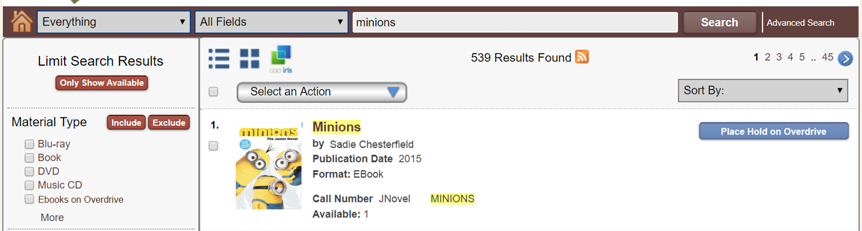

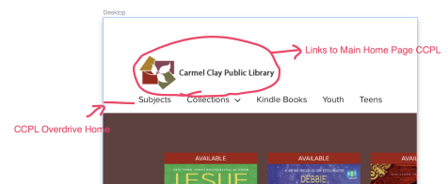

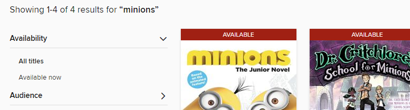

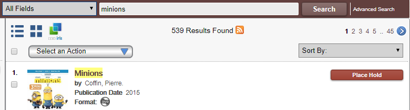

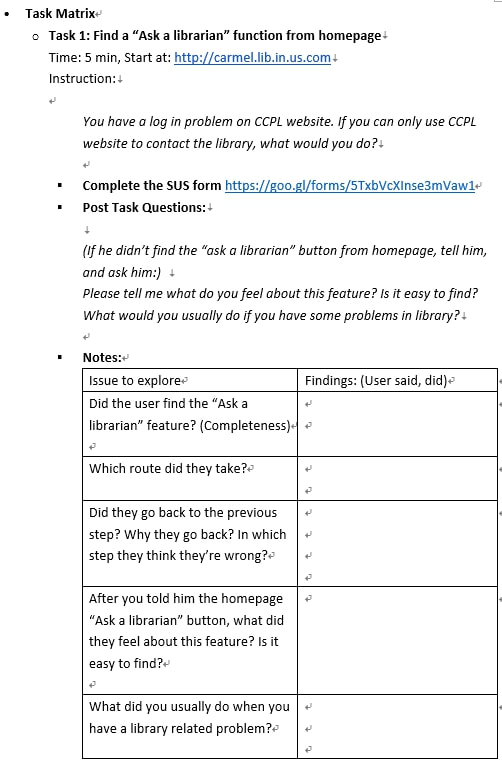

After our preliminary assessments, we identified two main problems: information architecture and navigation. To verify our hypothesis, we planned a usability study with three main tasks designed to test it and address the website's main problems, using both quantitative and qualitative metrics.

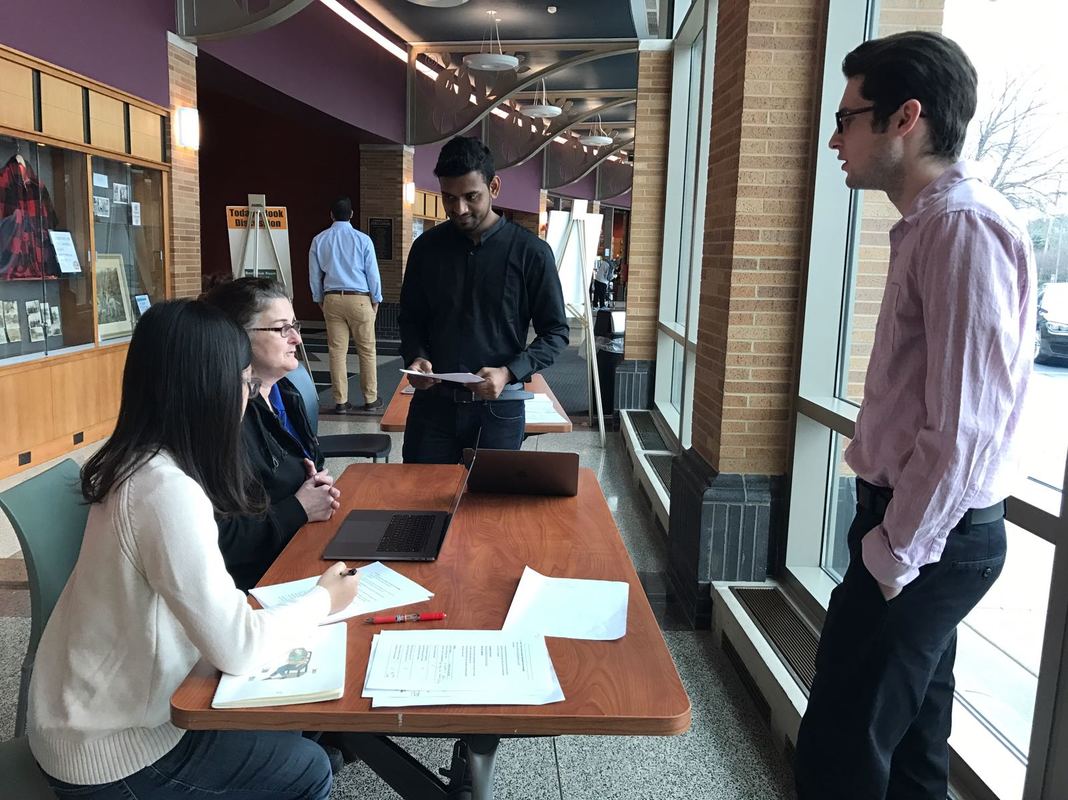

Study process: We conducted the whole usability study at Carmel Library with 10 participants. Each participant spent 15 minutes completing three tasks, answering several open-ended questions, and filling out the System Usability Scale (SUS) questionnaire for quantitative data.
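The SUS questionnaire has ten statements, each answered on a 1-5 scale. Its standard scoring rule converts each participant's answers into a score from 0 to 100. Below is a minimal Python sketch of that rule. The response lists are made-up examples, not data from this study.

```python
def sus_score(responses):
    """Score one participant's SUS questionnaire.

    responses: ten answers on a 1-5 scale, in questionnaire order (item 1 first).
    """
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for item, r in enumerate(responses, start=1):
        # Odd items are positively worded: contribution = response - 1.
        # Even items are negatively worded: contribution = 5 - response.
        total += (r - 1) if item % 2 == 1 else (5 - r)
    # Each contribution is 0-4, so total is 0-40; multiply by 2.5 to get 0-100.
    return total * 2.5


def mean_sus(all_responses):
    """Average the SUS scores of several participants."""
    scores = [sus_score(r) for r in all_responses]
    return sum(scores) / len(scores)


# Made-up example: a neutral answer (3) on every item.
print(sus_score([3] * 10))  # 50.0
```

A SUS score is not a percentage, even though it ranges from 0 to 100. Averaging per-participant scores, as `mean_sus` does, gives a single figure for the whole group.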

Result:
